Nearly nine months after artificial intelligence software like ChatGPT burst into mainstream conversation, the college is continuing to provide faculty and students guidance on how they can – and shouldn’t – incorporate the technology into their work.

What you need to know: There are no college-wide blanket policies around AI. Greg Foster-Rice, associate provost for Student Retention Initiatives and chair of Columbia’s AI task force, said the college wants faculty and students to lean into AI but approach it with some caution.

“How we respond to AI is all very much a work in progress as the technology is evolving and faculty are responsible for talking about it with students, just as students shouldn’t hesitate to ask their faculty for guidance on how AI may or may not be allowed within each classroom setting,” Foster-Rice told the Chronicle.

What hasn’t changed: Using AI when unauthorized or without properly citing it is not permitted. Many professors have also added an AI clause to their syllabi for students to reference.

In a college-wide email on Sept. 6, Senior Vice President and Provost Marcella David encouraged faculty and staff to continue referencing the college’s Academic Integrity policy for guidance on overseeing the use of AI.

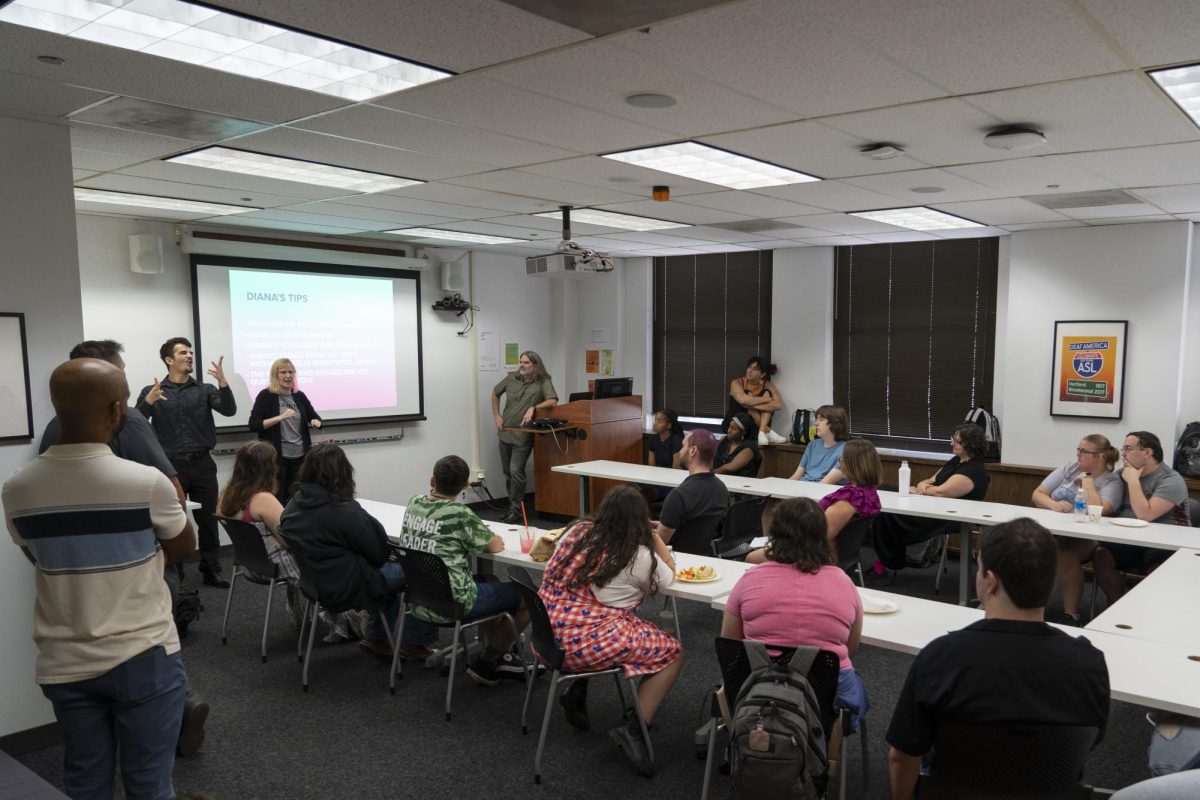

What’s new: The college AI task force – consisting of faculty, staff and students – worked through the spring semester to launch a comprehensive AI resource page that breaks down what the technology is, how to use it and what the college’s current policy is.

The college’s learning management system also now has a “Canvas Commons” page where faculty can go for various academic resources, including AI ethics, academic integrity and training around AI biases and legal issues.

What’s next: The AI task force will meet once per semester, and the topic will be discussed at the college’s faculty development in January.

The AI resource page breaks down the technical, ethical and philosophical pillars of AI to give students, faculty and staff a fuller look at what AI can and shouldn’t be used to do.

Zooming out: People – in and outside academia – have been using AI for years without necessarily realizing it, Foster-Rice said, citing technology like Grammarly, coding or predictive text in smartphones.

“It’s important to fundamentally understand what AI can do and how we can use it responsibly,” Foster-Rice said.

What students are saying: Angely Camacho, a sophomore music major, said her professors discussed how to use AI in their courses during the first week of classes. She said she likes that the college does not have a one-size-fits-all policy.

“I think it is better than having one policy by the book for every class because every course is unique,” Camacho said.

Nayeli Narez, a first-year interior architecture major, said she was given the option to use AI in some of her classes and sees why the school does not have a blanket AI policy in place.

“I understand both sides of why there wouldn’t be a general policy and why there could be,” Narez said.